Much like the first time I encountered an LLM (back in the days of GPT-2 and AI Dungeon) and was left utterly stunned, wondering whether I’d fallen victim to some kind of elaborate joke, there comes a moment, if you work with language models long enough, when the illusion breaks.

It’s not a spectacular or headline-grabbing moment. It has nothing to do with clever prompts, benchmarks, or unbelievable hallucinations. It’s the encounter with catastrophic, at-first-glance incomprehensible errors.

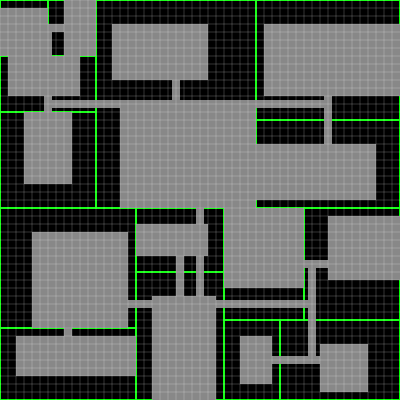

I’ve run into these absolute failures of LLMs when dealing with problems that are more structurally demanding than usual. For instance: a procedural generator for roguelike levels where the model was supposed to respect certain invariants. Or a combat system accounting for flanking and geometric constraints, involving angles and simultaneous states. This is where I found the model beginning to produce utterly incoherent results. And it’s not that it fails on some detail; it loses the thread of the system entirely.

What’s interesting, starting from there, is not the frustration. When your profile is technical and you’re not staking your future on the roulette wheel of so-called “vibe coding,” you have more than enough resources to fall back on, and in fact you often spend just as long writing a prompt that properly captures the business logic as you would programming it yourself. The interesting part, as I said, is the question: what kind of intelligence is this really, and why does it collapse precisely in these domains?

At first glance, one might say the problem is that it’s probabilistic rather than deterministic. That answer is correct, but it feels insufficient to me; the structure of the problem runs deeper. What I believe these limits reveal with striking clarity is that an LLM does not execute algorithms at inference time, nor does it maintain explicit, verifiable states that remain stable under transformation. What it does is generate sequences of text that are plausible given the preceding ones.

When we write code, and even when we’re still in the mental pre-coding phase, we operate with a notion of state that, however incomplete or fuzzy, has continuity. We can picture a grid, place elements, iterate through loops, detect inconsistencies, backtrack. If we’re building a turn-based RPG with a tile grid, we can run it in our imagination before writing a single line of code and think through the consequences of our design decisions. That internal simulation doesn’t need to be perfect to be useful, but it does need a certain stability, a capacity to maintain relationships between elements over time. That is precisely what allows us to design algorithms that respect global invariants, because even if we’re not literally executing the code in our heads, we’re constantly cross-checking the local against the global.

The language model, unlike us, does none of this. It has no persistent representation of the state it is describing, nor any internal mechanism that compels it to respect invariants across a sequence. Each token it generates is conditioned by the preceding ones, yes, but that “memory” resembles a statistical echo more than anything else. It is not a structured state. That is why it can produce code that looks locally reasonable but breaks down globally. The LLM lacks our ability to operate on the system it describes; what it does instead is improvise a coherent narrative about how that system ought to behave. It is generating a plausible description of execution, not execution itself.

This becomes especially evident the moment you step outside domains where tolerance for error is high. For CRUD tasks, simple scripting, or API integration, where patterns are hyper-standardised and failures carry no serious systemic consequences, the model performs surprisingly well, because it suffices for the solution to resemble something correct, and in these domains correct solutions tend to look quite alike. In other words, in hyper-standardised domains, the distribution of correct solutions is narrow and densely represented in the training data, so the probabilistic approximation converges easily to something valid. But the moment you enter discrete spaces with hard constraints, the distribution of correct solutions becomes a set of negligible measure within the space of plausible responses. As soon as you need to count elements, maintain connectivity in a map, or guarantee that a set of conditions holds in every case, that’s where probabilistic approximation stops being enough. There are domains where there is no room for plausibility. Either there are ten elements, or there aren’t. Either the graph is connected, or it isn’t.
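To make the "connected or not" point concrete, here is a minimal sketch of the kind of check I mean. It assumes a map stored as a list of strings where `.` is a walkable floor tile and anything else is a wall; that encoding and the name `is_connected` are my own illustrative choices, not part of any particular engine.

```python
from collections import deque


def is_connected(grid):
    """Return True if every floor tile ('.') is reachable from every other one."""
    floors = [(r, c) for r, row in enumerate(grid)
              for c, ch in enumerate(row) if ch == "."]
    if not floors:
        return True  # assumption: a map with no floor counts as trivially connected

    # Breadth-first flood fill from an arbitrary floor tile (4-way movement).
    seen = {floors[0]}
    queue = deque([floors[0]])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if (0 <= nr < len(grid) and 0 <= nc < len(grid[nr])
                    and grid[nr][nc] == "." and (nr, nc) not in seen):
                seen.add((nr, nc))
                queue.append((nr, nc))

    # The answer is binary: either the fill reached every floor tile or it did not.
    return len(seen) == len(floors)


ring_map = ["#####", "#...#", "#.#.#", "#...#", "#####"]
split_map = ["#####", "#.#.#", "#.#.#", "#####"]
print(is_connected(ring_map), is_connected(split_map))
```

A generated level that merely looks like a connected dungeon gets no partial credit from a function like this, which is exactly the property that plausibility cannot provide.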

The counting example is particularly instructive in this regard. One of the hardest things you can ask a model to do is count, and we’ve seen plenty of memes about it. Models have to be patched from the outside to do it reliably, regardless of how large or sophisticated they are. And this tells us a great deal about their inner workings. Counting requires maintaining a discrete state, incrementing it reliably, and verifying at the end that the cardinality is as expected. A language model doesn’t work that way: it generates a sequence that sounds like someone listing items, but it has no internal counter guaranteeing anything. It works in short cases because it has seen millions of examples with strongly marked patterns, but there is no structural guarantee behind it.
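Spelled out as code, the three ingredients of counting (a discrete state, a reliable increment, a final cardinality check) look roughly like this. It is a deliberately naive sketch; `count_and_verify` is a hypothetical helper I am using for illustration only.

```python
def count_and_verify(items, expected):
    """Count items with an explicit counter and fail loudly on a mismatch."""
    count = 0              # the discrete state
    for _ in items:
        count += 1         # exactly one increment per element
    if count != expected:  # the final check on cardinality
        raise ValueError(f"expected {expected} items, got {count}")
    return count


enemies = ["goblin", "orc", "bat"]
print(count_and_verify(enemies, 3))  # prints 3
```

Nothing here is clever, and that is the point: the guarantee comes from the counter and the check, not from how convincing the list looks.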

Obviously, by 2026 the counting example is trivial in the sense that we have fewer and fewer “pure LLMs” and more and more “LLMs with tools.” Systems deployed in production are not just autoregressive transformers; they are systems augmented with code execution, agentic loops, external result feedback, and iterative verification. When a model with access to a Python interpreter needs to count elements, it simply writes and runs `len(list)`. But I bring up the counting example precisely to highlight problems that are structural to LLMs.

If we return to problems closer to systems design, such as procedurally generating a city by partitioning space into blocks, or computing combat bonuses based on angles and relative positions, exactly the same phenomenon appears at a different scale. When asked general questions, the model can suggest well-known algorithms (a BSP for space partitioning, for example), because that falls within its capacity for pattern retrieval. But when asked to instantiate that algorithm in a concrete context, with specific constraints and a globally coherent result, it stops operating on a structure and reverts to operating on words. The result ends up being a description that may look like an algorithm, but won’t behave correctly as one.
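For contrast, here is what operating on the structure looks like for the BSP case: a small sketch that splits a rectangle into blocks and then verifies the global invariants independently of the code that produced them. The `Rect` type, the `min_size` parameter, and the split policy (cut along the longer splittable side) are assumptions made for the example, not a reference implementation of BSP.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    x: int
    y: int
    w: int
    h: int


def bsp_split(rect, min_size, rng):
    """Recursively split rect into leaves whose sides are all >= min_size (min_size >= 1)."""
    can_split_w = rect.w >= 2 * min_size
    can_split_h = rect.h >= 2 * min_size
    if not (can_split_w or can_split_h):
        return [rect]
    # Assumed policy: cut across the width when it is the longer splittable side.
    if can_split_w and (not can_split_h or rect.w >= rect.h):
        cut = rng.randint(min_size, rect.w - min_size)
        a = Rect(rect.x, rect.y, cut, rect.h)
        b = Rect(rect.x + cut, rect.y, rect.w - cut, rect.h)
    else:
        cut = rng.randint(min_size, rect.h - min_size)
        a = Rect(rect.x, rect.y, rect.w, cut)
        b = Rect(rect.x, rect.y + cut, rect.w, rect.h - cut)
    return bsp_split(a, min_size, rng) + bsp_split(b, min_size, rng)


def check_partition(root, leaves, min_size):
    """Global invariants: leaves stay inside root, never overlap, and cover it fully."""
    covered = set()
    for leaf in leaves:
        if leaf.w < min_size or leaf.h < min_size:
            return False
        if (leaf.x < root.x or leaf.y < root.y
                or leaf.x + leaf.w > root.x + root.w
                or leaf.y + leaf.h > root.y + root.h):
            return False
        for x in range(leaf.x, leaf.x + leaf.w):
            for y in range(leaf.y, leaf.y + leaf.h):
                if (x, y) in covered:
                    return False  # two blocks claim the same cell
                covered.add((x, y))
    return len(covered) == root.w * root.h


root = Rect(0, 0, 40, 30)
blocks = bsp_split(root, 5, random.Random(0))
print(len(blocks), check_partition(root, blocks, 5))
```

The checker is intentionally brute force (it visits every cell), because its job is to be obviously right rather than fast. A description that only resembles this algorithm would be caught by `check_partition` the first time it dropped or duplicated a block.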

One might argue that the upper bound of what’s achievable can be raised by the external tools and agents mentioned earlier, but delegating execution to deterministic systems merely externalises the problem rather than eliminating the limitation. Moreover, the cost of that orchestration can easily outweigh that of a direct implementation.

There is an important nuance here that we cannot ignore: the role of the LLM is different from the one we intuitively want to assign it. It is very good at exploring possibility spaces, suggesting approaches, highlighting trade-offs, or even detecting local inconsistencies in already-written code. That is, it works well at everything that involves expanding and stress-testing the space of ideas. What it does not do well is closing that space: guaranteeing that a concrete solution meets all the imposed conditions. This is precisely why, in serious software development, it is useful for creating functional prototypes but gets stuck and ceases to be useful when there is complex business logic to converge towards: at that point, specifying every small detail so the LLM can generate code that meets the specifications is a waste of time.

This also explains another fairly common phenomenon: when you ask an LLM for ideas to extend a reasonably well-defined videogame or system, it tends to propose things that, while they may sound interesting, are entirely impractical. It produces ideas that are completely decoupled from the real constraints of the system. The model doesn’t understand implementation cost, emergent complexity, or the dependencies each new component introduces. It operates in a space of concepts where adding “emergent AI-driven factions” to a game is just one more variation within a genre, and not a full architectural rewrite.

The gap, then, lies between working in a space of words and working in a space of systems. In the former, combinations are evaluated by their plausibility and novelty; in the latter, by their feasibility and internal coherence. The human developer, even implicitly, is constantly projecting ideas onto a concrete system and assessing how well they fit. The model, unless explicitly forced to, lacks that anchoring. It has no grounding mechanism in a verifiable external state.

All of this gives rise to a considerably more useful way of understanding the limits of LLMs in software architecture, as well as establishing what their appropriate use actually is. Beyond the optimisation of plausibility over formal correctness, their usefulness depends on whether the problem at hand requires exploration or guarantee. When the priority is to open up the solution space, challenge assumptions, or find alternative approaches, the model can act as a surprisingly powerful amplifier of thought. But where what matters is maintaining invariants, ensuring global behaviours, or working with discrete structures with no margin for error, the responsibility must fall to deterministic systems.
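One way to picture that division of labour is a propose-then-verify loop: whatever generates the candidates (a model, a person, a random generator) is free to explore, while acceptance is decided only by deterministic checks. The sketch below is hypothetical; `first_valid`, the candidate lists, and the two invariants are placeholders I made up to show the shape of the idea.

```python
def first_valid(candidates, invariants):
    """Return the first candidate that satisfies every invariant, or None."""
    for candidate in candidates:
        if all(check(candidate) for check in invariants):
            return candidate
    return None


# Stand-ins for proposals coming from the exploratory side.
proposals = [["A", "B"], ["A", "A", "B"], ["A", "B", "C"]]

# Deterministic guarantees: exactly three spawn points, all distinct.
invariants = [
    lambda spawns: len(spawns) == 3,
    lambda spawns: len(set(spawns)) == len(spawns),
]

print(first_valid(proposals, invariants))  # prints ['A', 'B', 'C']
```

The generator can be as creative or as wrong as it likes; only the checks decide what gets through.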

This is not an accidental limitation that will vanish with larger models. It is not a question of scale, it is a question of architecture. An autoregressive model that neither executes code nor interacts with an external state can hardly guarantee global invariants, no matter how much it grows in size. And this is where I, along with others who have already witnessed the disasters that result from entrusting their repositories or email to their LLMs, draw the line on the idea that software development or software architecture is about to disappear, as some AI company CEOs would have us believe. The limits of LLMs in this regard are not a matter of insufficient power: they are structural, rooted in their most fundamental nature. Does that mean LLMs will go away? Certainly not! It’s simply that, as so often happens, the hype doesn’t understand what is actually going on. Understanding the limitations of LLMs can help us refine how we use them. As I’ve described, they are extremely useful for generating possibilities, but prove to be a profoundly inadequate system when oriented towards the validation of realities.